
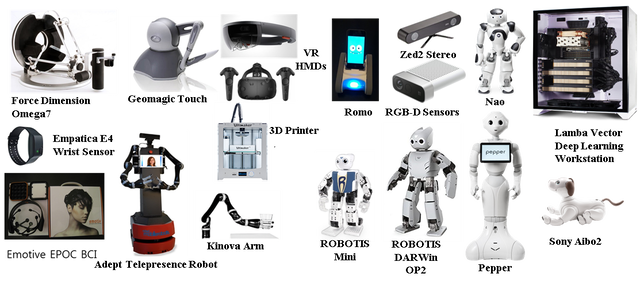

Robotic Platforms

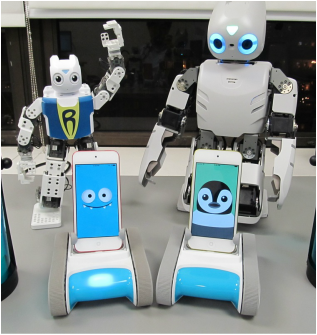

The ART-Med lab has multiple robotic platforms for research projects focused on tele-manipulation, mobile navigation, and human-robot interaction. Below is the list of robotic platforms:

The ART-Med lab also provides diverse multi-modal sensors for effective and creative human-robot interaction, including the following:

Haptic and Augmented-Reality (AR) Interfaces

The lab is equipped with the following haptic interfaces and AR devices for interactive multi-modal communication with human users.

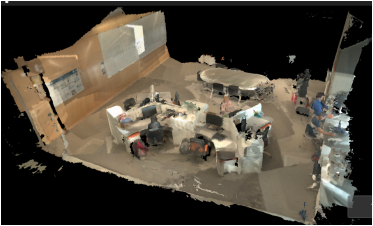

Computing and Prototyping Facility

The ART-Med lab provides multiple computing platforms with diverse operating system support (Windows, Mac, and Linux), along with prototyping support and embedded systems.

